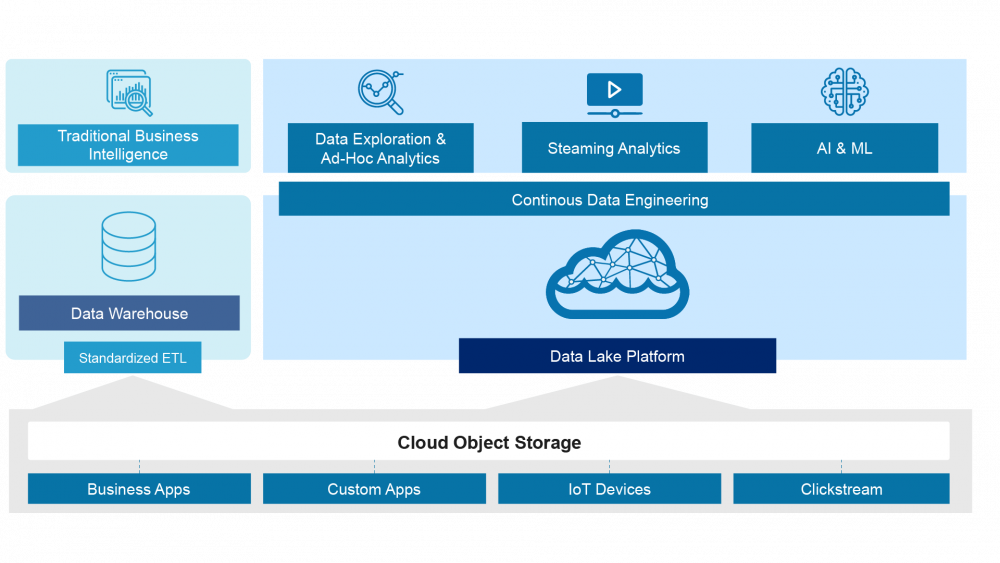

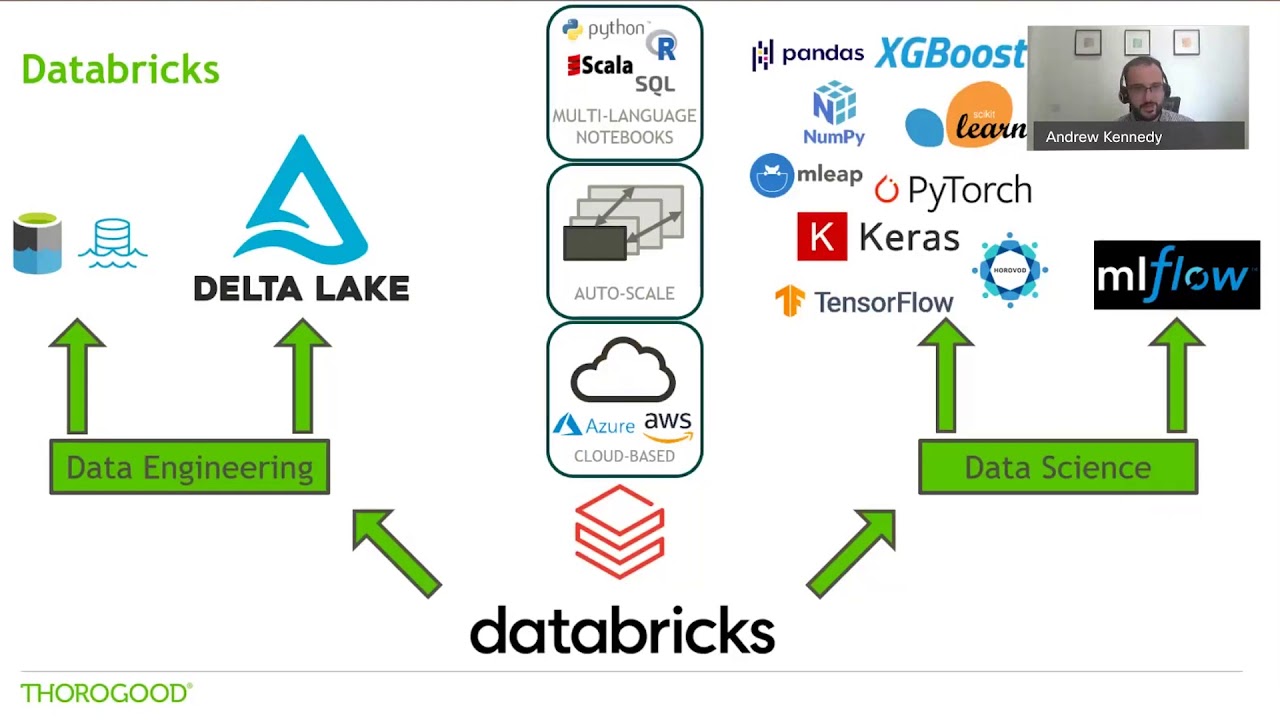

A data warehouse (often abbreviated as DWH or DW) is a structured repository of data collected and filtered for specific tasks. It integrates relevant data from internal and external sources such as ERP and CRM systems, websites, social media, and mobile applications. Data warehouses often combine relational data sets from multiple sources, such as user preferences, business reports, and transactional data, to aggregate historical information. Before the data is loaded into the warehouse, it should be transformed and cleansed so it can be used for analysis. Data that is not relevant for a particular case is discarded.

While a database stores current information ("what's happening here and now"), a data warehouse can store historical slices of the same database, and holding that history for analysis is its primary purpose. Dozens of different parameters, some of them quite complicated, can be tracked and quickly retrieved by external tools for analysis. For example, this could be an indicator such as the PnL (profit and loss) of a particular customer group over the entire business history, represented as a graph. The trade-off is that a data warehouse rarely contains freshly updated data: it always lags behind its source databases and its data carries older timestamps. The only exception is some calculated indicators that require frequent updating.

HPE Ezmeral Unified Analytics is, according to HPE, the first cloud-native solution to bring Kubernetes-based Apache Spark analytics and the simplicity of unified data lakehouses using Delta Lake on premises. The service modernizes legacy data and applications to optimize data-intensive workloads from edge to cloud, delivering the scale and elasticity required for advanced analytics. Built from the ground up to be open and hybrid, its 100% open source stack frees organizations from vendor lock-in for their data platform. Instead of requiring all of an organization's data to be stored in a public cloud, HPE Ezmeral Unified Analytics is optimized for on-premises and hybrid deployments and uses open source software to ensure data portability as needed.

Available on the HPE GreenLake edge-to-cloud platform, this unified data experience allows teams to securely connect to data where it resides today without disrupting existing data access patterns. It includes a scale-up data lakehouse platform optimized for Apache Spark that is deployed on premises. Its flexibility and scale can accommodate large enterprise data sets, or lakehouses, so customers have the elasticity they need for advanced analytics everywhere. Data scientists can leverage an elastic, unified analytics platform for data and applications on premises, at the edge, and in public clouds, which helps them accelerate AI and ML workflows.

The lakehouse architecture also enables multiple parties to read and write data in the system concurrently, because it supports database transactions that comply with the ACID (atomicity, consistency, isolation, and durability) principles, detailed below:

Atomicity means that when a transaction is processed, either the entire transaction succeeds or none of it does. This helps prevent data loss or corruption if a process is interrupted.

Consistency makes sure that transactions take place in predictable ways. It ensures all data is valid according to predefined rules, maintaining the integrity of the data.

Isolation guarantees that concurrent transactions do not interfere with each other: the intermediate state of a transaction is not visible to other transactions until it is completed. This makes it possible for multiple parties to read and write from the same system at the same time without interfering with one another.

Durability ensures that changes made to the data persist once a transaction is complete, even if there is a system failure afterward. Any changes that result from a committed transaction are stored permanently.
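To make atomicity concrete, here is a minimal sketch in Python that uses the standard-library `sqlite3` module as a stand-in for a transactional store; it is not how Delta Lake or any particular lakehouse implements transactions. The `trades` table and the `load_batch` helper are hypothetical. Each batch is inserted in a single transaction, so a duplicate key in the second batch rolls back that entire batch, including the row that had already been inserted.

```python
import sqlite3

# Hypothetical warehouse-style table of trades, one PnL value per trade.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE trades ("
    " trade_id INTEGER PRIMARY KEY,"
    " customer_group TEXT NOT NULL,"
    " pnl REAL NOT NULL)"
)

def load_batch(conn: sqlite3.Connection, rows: list[tuple[int, str, float]]) -> bool:
    """Insert every row in one transaction; return True if committed, False if rolled back."""
    try:
        # The connection's context manager commits on success and
        # rolls back the whole transaction if an exception escapes.
        with conn:
            conn.executemany(
                "INSERT INTO trades (trade_id, customer_group, pnl) VALUES (?, ?, ?)",
                rows,
            )
        return True
    except sqlite3.IntegrityError:
        # A constraint violation (here, a duplicate trade_id) aborts the batch.
        return False

ok = load_batch(conn, [(1, "retail", 120.0), (2, "retail", -30.0)])
# Trade 3 is valid, but trade 1 already exists, so the whole batch is undone.
bad = load_batch(conn, [(3, "corporate", 500.0), (1, "corporate", 75.0)])
count = conn.execute("SELECT COUNT(*) FROM trades").fetchone()[0]
print(ok, bad, count)  # True False 2
```

Only atomicity is exercised here; isolation and durability depend on the storage engine and are not demonstrated by this toy.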

At a high level, there are two primary layers to the data lakehouse architecture. The lakehouse platform manages the ingestion of data into the storage layer (i.e., the data lake). The processing layer can then query the data in the storage layer directly, using a variety of tools, without requiring the data to be loaded into a data warehouse or transformed into a proprietary format. The data can then be used by BI applications as well as AI and ML tools.

This architecture provides the economics of a data lake, but because any type of processing engine can read this data, organizations have the flexibility to make the prepared data available for analysis by a variety of systems. In this way, processing and analysis can be done with higher performance and lower cost.
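The two-layer idea can be illustrated with a deliberately tiny Python sketch, assuming CSV files in a local directory as the storage layer (real lakehouses typically use columnar formats such as Parquet plus a table format such as Delta Lake). The helpers `ingest`, `scan`, `pnl_by_group`, and `pnl_features` are hypothetical names. One function plays the platform's ingest role, and two independent "processing engines" read the same files in place, one computing a BI-style aggregate and one extracting ML-style features, without copying the data into a warehouse or a proprietary format.

```python
import csv
import tempfile
from collections import defaultdict
from pathlib import Path

FIELDS = ["customer_group", "pnl"]

def ingest(storage: Path, batch_name: str, rows: list[dict]) -> Path:
    """Platform role: write one batch into the storage layer as an open-format file."""
    path = storage / f"{batch_name}.csv"
    with path.open("w", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        writer.writeheader()
        writer.writerows(rows)
    return path

def scan(storage: Path):
    """Read every batch file directly from the storage layer, in file-name order."""
    for path in sorted(storage.glob("*.csv")):
        with path.open(newline="") as f:
            yield from csv.DictReader(f)

def pnl_by_group(storage: Path) -> dict[str, float]:
    """Processing engine 1: BI-style aggregate of PnL per customer group."""
    totals: defaultdict[str, float] = defaultdict(float)
    for row in scan(storage):
        totals[row["customer_group"]] += float(row["pnl"])
    return dict(totals)

def pnl_features(storage: Path) -> list[list[float]]:
    """Processing engine 2: ML-style feature vectors built from the same files."""
    return [[float(row["pnl"])] for row in scan(storage)]

with tempfile.TemporaryDirectory() as tmp:
    lake = Path(tmp)
    ingest(lake, "day1", [
        {"customer_group": "retail", "pnl": 120.0},
        {"customer_group": "corporate", "pnl": 500.0},
    ])
    ingest(lake, "day2", [{"customer_group": "retail", "pnl": -30.0}])
    totals = pnl_by_group(lake)
    features = pnl_features(lake)

print(totals)    # {'retail': 90.0, 'corporate': 500.0}
print(features)  # [[120.0], [500.0], [-30.0]]
```

Both engines read the same files in place, so adding a third consumer would not require another copy of the data. A real deployment would also need the transaction log and schema management that table formats such as Delta Lake provide, which this sketch omits.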
